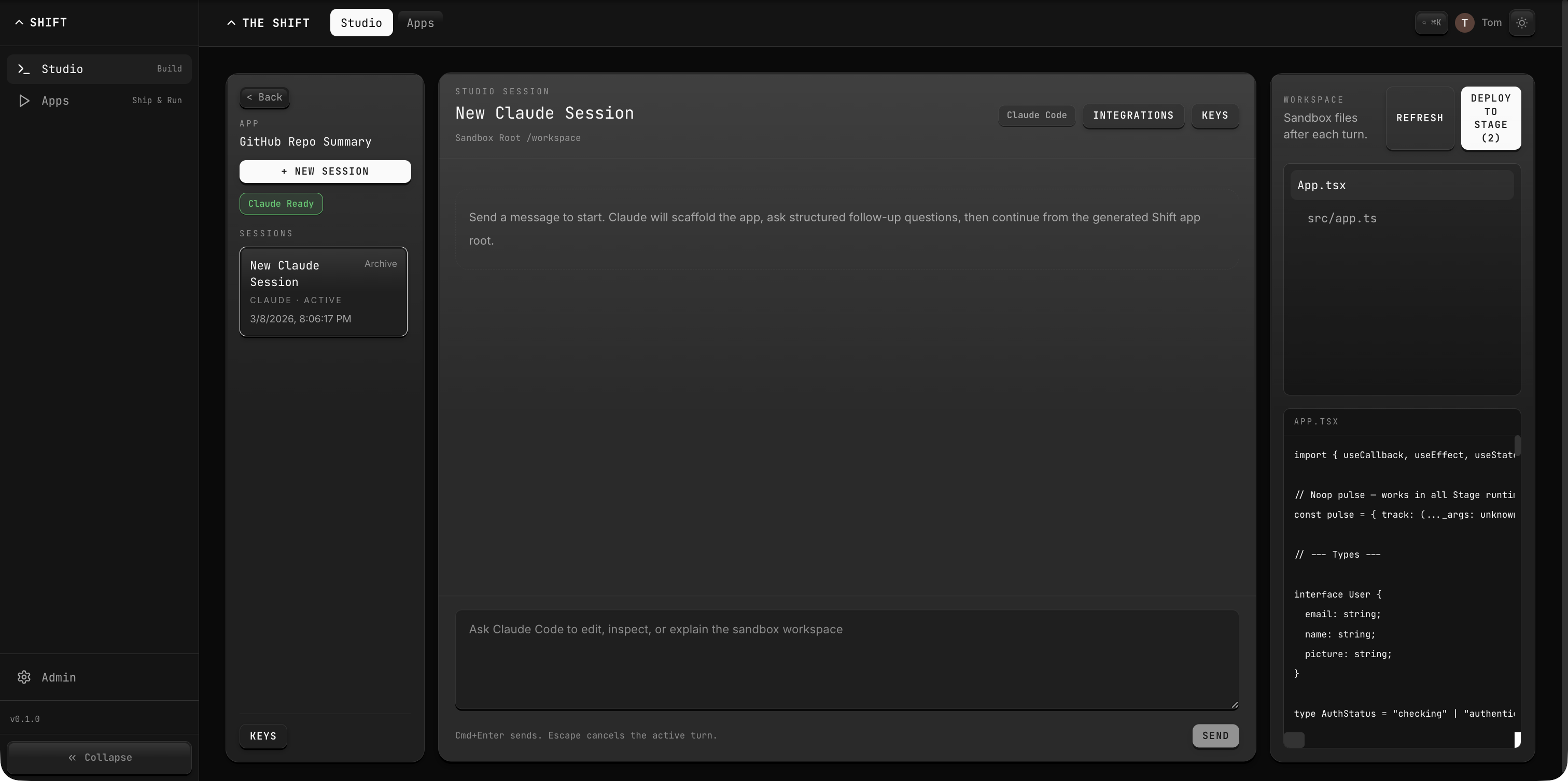

Published API Backends

Published Stage apps can expose server-side API routes through the gateway. These backends run in an isolated module environment with access to three virtual modules that provide in-process access to platform services without external HTTP calls.

How It Works

When a Stage app is deployed with a backend entrypoint (e.g., /app/src/app.ts), the gateway compiles it with Sucrase and executes it in a sandboxed module loader. The backend must export a Hono app or a compatible handler with a .fetch(request) method.

```text
Client → Gateway /stage/:appSid/api/* → Published API Backend
                                        ├─ @stage/inference (AI inference)
                                        ├─ @stage/compute   (Convex functions)
                                        └─ @stage/utils     (retry + batching)
```

Requests are routed to /:appSid/api and /:appSid/api/*. The gateway strips the prefix before forwarding to your handler, so your routes start from /.
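To make the prefix stripping concrete, the following is a minimal sketch of the path mapping described above. The helper name `stripApiPrefix` is hypothetical and this is not the gateway's actual code; it only illustrates how a routed path might translate to the path your handler sees.

```typescript
// Hypothetical helper, for illustration only: maps a routed path such as
// "/:appSid/api/users" to the path the backend handler would see ("/users").
function stripApiPrefix(pathname: string, appSid: string): string | null {
  const prefix = `/${appSid}/api`;
  if (pathname === prefix) return "/"; // bare /:appSid/api maps to the root route
  if (pathname.startsWith(prefix + "/")) {
    return pathname.slice(prefix.length); // keep the leading slash of the remainder
  }
  return null; // not an API route for this app
}
```

Under this sketch, a route you register as `/health` would be reached by a request whose routed path is `/:appSid/api/health`.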

Virtual Modules

@stage/inference

Provides an AI inference client tied to the current session. All calls are routed through the platform's Inference service with automatic session attribution.

```typescript
import { inference } from "@stage/inference";

// Chat completion
const chat = await inference.chat({
  model: "gpt-4o-mini",
  messages: [{ role: "user", content: "Summarize this data" }],
});

// Text embeddings
const embeddings = await inference.embed({
  model: "text-embedding-3-small",
  input: "Quarterly revenue forecast",
});

// Image generation
const image = await inference.image({
  model: "gpt-image-1",
  prompt: "Architecture diagram of a workflow runner",
});

// Usage reporting
const usage = await inference.usage({ provider: "openai-prod" });
```

Use a model that is confirmed in the current environment or the provider's configured default. Do not assume Anthropic model ids are available everywhere.

Methods:

| Method | Description |
|---|---|
| `inference.chat(req)` | Chat completion with any registered model |
| `inference.embed(req)` | Generate text embeddings |
| `inference.image(req)` | Generate images from prompts |
| `inference.usage(filters?)` | Query usage metrics for the session |

All methods return unwrapped response data. Errors throw a standard Error with the message from the platform response.
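Because failures surface as thrown `Error` instances, a handler may want to convert them into a value it can return to the client. The sketch below shows one way to do that. The `Result` type and `toResult` helper are hypothetical and not part of `@stage/inference`, and `failingCall` is a stand-in for an inference call so the example runs on its own.

```typescript
// Hypothetical result type and helper; not part of @stage/inference.
type Result<T> = { ok: true; data: T } | { ok: false; error: string };

async function toResult<T>(call: () => Promise<T>): Promise<Result<T>> {
  try {
    return { ok: true, data: await call() };
  } catch (err) {
    // The docs say errors are standard Error instances; String() is a defensive fallback.
    const message = err instanceof Error ? err.message : String(err);
    return { ok: false, error: message };
  }
}

// Stand-in for an inference call that fails, so the sketch is self-contained.
async function failingCall(): Promise<string> {
  throw new Error("model not available");
}
```

In a backend this could wrap a real call, for example `await toResult(() => inference.chat({ model, messages }))`, and the handler could then choose an HTTP status based on `ok`.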

@stage/compute

Provides a Convex-compatible client for calling compute functions deployed to the app's module.

```typescript
import { compute } from "@stage/compute";

// Query data
const items = await compute.query("tasks", "list", { status: "active" });

// Mutate data
await compute.mutation("tasks", "create", {
  title: "Review PR",
  assignee: "agent@the-shift.dev",
});

// Run an action (side-effectful)
await compute.action("notifications", "send", {
  to: "team@example.com",
  message: "Deploy complete",
});
```

Methods:

| Method | Parameters | Description |
|---|---|---|
| `compute.query(module, fn, args?)` | Module name, function name, optional args object | Read-only query |
| `compute.mutation(module, fn, args?)` | Module name, function name, optional args object | Read-write mutation |
| `compute.action(module, fn, args?)` | Module name, function name, optional args object | Side-effectful action |

Module naming: Compute module names must follow snake_case format matching ^[a-z][a-z0-9_]*$. The gateway validates this at deploy time.
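If you want to catch naming mistakes before deploying, the same pattern can be checked locally. This is a small sketch; the helper name `isValidModuleName` is hypothetical, and only the regular expression comes from the rule above.

```typescript
// Pattern copied from the naming rule above; the helper name is hypothetical.
const MODULE_NAME_PATTERN = /^[a-z][a-z0-9_]*$/;

function isValidModuleName(name: string): boolean {
  return MODULE_NAME_PATTERN.test(name);
}
```

Names such as `tasks` or `user_profiles` pass this check, while `Tasks`, `2fast`, and `my-module` do not.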

@stage/utils

Provides resilient HTTP and concurrency utilities for backend operations.

```typescript
import { fetchWithRetry, batchedMap } from "@stage/utils";

// Fetch with automatic retry on 429/5xx
const response = await fetchWithRetry(
  "https://api.example.com/data",
  { method: "GET", headers: { Authorization: "Bearer ..." } },
  5, // max retries (default: 3)
);

// Process items with bounded concurrency
const results = await batchedMap(
  urls,
  async (url) => {
    const res = await fetch(url);
    return res.json();
  },
  3, // concurrency limit (default: 5)
);
```

Exports:

| Function | Signature | Description |
|---|---|---|
| `fetchWithRetry` | `(url, opts, maxRetries?) → Promise<Response>` | Retries on HTTP 429 or 5xx with exponential backoff (2^attempt × 1000 ms + jitter) |
| `batchedMap` | `(items, fn, concurrency?) → Promise<R[]>` | Processes an array through an async function with bounded concurrency, preserving order |
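To illustrate the two behaviors in the table, the sketch below computes a delay from the stated backoff formula and shows one common way to map with bounded concurrency while preserving order. It is not the source of `@stage/utils`. The names `backoffDelayMs` and `boundedMap`, the 1000 ms jitter ceiling, and the injectable `random` parameter are all assumptions made for illustration.

```typescript
// Delay before a retry, following 2^attempt × 1000 ms + jitter.
// Assumptions: jitter is uniform in [0, maxJitterMs) and attempts count from 0.
function backoffDelayMs(
  attempt: number,
  maxJitterMs = 1000,
  random: () => number = Math.random,
): number {
  return 2 ** attempt * 1000 + Math.floor(random() * maxJitterMs);
}

// Order-preserving async map with at most `concurrency` calls in flight.
async function boundedMap<T, R>(
  items: readonly T[],
  fn: (item: T) => Promise<R>,
  concurrency = 5,
): Promise<R[]> {
  const results = new Array<R>(items.length);
  let next = 0; // index of the next unclaimed item

  async function worker(): Promise<void> {
    while (next < items.length) {
      const i = next++; // claimed synchronously, so no two workers share an index
      results[i] = await fn(items[i]); // written by index, so output order matches input
    }
  }

  const workerCount = Math.max(1, Math.min(concurrency, items.length));
  await Promise.all(Array.from({ length: workerCount }, () => worker()));
  return results;
}
```

With jitter ignored, the formula gives base delays of 1 s, 2 s, 4 s, and 8 s for attempts 0 through 3. The worker-pool approach starts a new call as soon as any in-flight call finishes, rather than waiting for a whole batch to complete.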

Complete Example

```typescript
import { Hono } from "hono";
import { inference } from "@stage/inference";
import { compute } from "@stage/compute";
import { fetchWithRetry } from "@stage/utils";

const app = new Hono();

app.get("/health", (c) => c.json({ status: "ok" }));

app.post("/summarize", async (c) => {
  const { documentId } = await c.req.json();

  // Fetch document from compute
  const doc = await compute.query("documents", "get", { id: documentId });

  // Summarize with AI
  const result = await inference.chat({
    model: "gpt-4o-mini",
    messages: [
      { role: "user", content: `Summarize: ${doc.content}` },
    ],
  });

  // Store the summary
  const summary = result.content[0]?.text ?? "";
  await compute.mutation("documents", "setSummary", {
    id: documentId,
    summary,
  });

  return c.json({ summary });
});

export default app;
```

Entrypoint Resolution

The gateway looks for a backend entrypoint in this order:

1. `/app/src/app.ts`
2. `/app/app.tsx`
3. `/app/src/index.ts`
4. `/app/index.ts`
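The first-match lookup can be pictured with the sketch below. It is an illustration only: the helper `resolveEntrypoint` and the idea of passing the deployed file paths as a set are assumptions, not the gateway's actual interface. The candidate paths are the ones listed above.

```typescript
// Candidate paths, in the lookup order given above.
const ENTRYPOINT_CANDIDATES = [
  "/app/src/app.ts",
  "/app/app.tsx",
  "/app/src/index.ts",
  "/app/index.ts",
] as const;

// Hypothetical helper: returns the first candidate present in `files`, or null.
function resolveEntrypoint(files: ReadonlySet<string>): string | null {
  for (const candidate of ENTRYPOINT_CANDIDATES) {
    if (files.has(candidate)) return candidate;
  }
  return null; // no backend entrypoint found
}
```

Under this sketch, an app that ships both `/app/src/index.ts` and `/app/index.ts` would have `/app/src/index.ts` selected, because it appears earlier in the list.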

The exported module must be a Hono app or any object with a .fetch(request) method.
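For backends that do not use Hono, a plain object with a `fetch` method is enough to meet this contract. The sketch below assumes a runtime that provides the standard `Request`, `Response`, and `URL` globals; the `/health` route and its JSON body are illustrative only.

```typescript
// A plain object satisfies the contract as long as it exposes fetch(request).
const handler = {
  async fetch(request: Request): Promise<Response> {
    const { pathname } = new URL(request.url);
    if (request.method === "GET" && pathname === "/health") {
      return new Response(JSON.stringify({ status: "ok" }), {
        status: 200,
        headers: { "Content-Type": "application/json" },
      });
    }
    // Anything else falls through to a 404.
    return new Response("Not found", { status: 404 });
  },
};
```

In a real entrypoint file this object would be the module's default export (`export default handler;`).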

Headers

The gateway injects the following headers into every request forwarded to your backend:

| Header | Value |
|---|---|
| `X-Stage-App` | The app's SID |
| `X-Stage-Session` | The session ID backing this app |
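A handler can read these headers to tag logs or scope work to the current app and session. The sketch below is one way to do so. The `readStageContext` helper and its types are hypothetical, and the `HeaderReader` interface is a minimal structural type so the function accepts a standard `Headers` object or a test stub.

```typescript
// Minimal structural type: satisfied by the standard Headers class or a stub.
interface HeaderReader {
  get(name: string): string | null;
}

interface StageContext {
  appSid: string;
  sessionId: string;
}

// Hypothetical helper: reads the two gateway-injected headers. Returns null if
// either is missing, for example when the handler is invoked outside the gateway.
function readStageContext(headers: HeaderReader): StageContext | null {
  const appSid = headers.get("X-Stage-App");
  const sessionId = headers.get("X-Stage-Session");
  if (!appSid || !sessionId) return null;
  return { appSid, sessionId };
}
```

In a Hono route this could be called as `readStageContext(c.req.raw.headers)`, since `c.req.raw` is the underlying `Request`.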

Caching

Published API backends are cached in-memory by app SID. The cache is invalidated when the app's session ID, lastDeployedAt, or updatedAt changes. You can force a cache clear by redeploying the app.
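The invalidation rule can be sketched as a cache keyed by app SID whose entries carry the three fields named above. This is an illustration of the described behavior, not the gateway's implementation. The `BackendCache` class, its method names, and the use of strings for the two timestamps are assumptions.

```typescript
// The three fields the text names as invalidation triggers.
// Assumption: timestamps are compared as opaque strings.
interface CacheStamp {
  sessionId: string;
  lastDeployedAt: string;
  updatedAt: string;
}

interface CacheEntry<T> {
  stamp: CacheStamp;
  backend: T;
}

// Hypothetical in-memory cache keyed by app SID.
class BackendCache<T> {
  private entries = new Map<string, CacheEntry<T>>();

  // Returns the cached backend only if all three stamp fields still match.
  get(appSid: string, current: CacheStamp): T | undefined {
    const entry = this.entries.get(appSid);
    if (!entry) return undefined;
    const s = entry.stamp;
    const fresh =
      s.sessionId === current.sessionId &&
      s.lastDeployedAt === current.lastDeployedAt &&
      s.updatedAt === current.updatedAt;
    if (!fresh) {
      this.entries.delete(appSid); // stale entry: drop it so the caller rebuilds
      return undefined;
    }
    return entry.backend;
  }

  set(appSid: string, stamp: CacheStamp, backend: T): void {
    this.entries.set(appSid, { stamp: { ...stamp }, backend });
  }
}
```

Redeploying changes `lastDeployedAt`, so under this sketch the next lookup misses and the backend is rebuilt, which matches the forced cache clear described above.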